| Copyright | (c) Douglas Burke 2019-2021 |

|---|---|

| License | BSD3 |

| Maintainer | dburke.gw@gmail.com |

| Stability | unstable |

| Portability | CPP, OverloadedStrings |

| Safe Haskell | None |

| Language | Haskell2010 |

Graphics.Vega.Tutorials.VegaLite

Description

This tutorial is inspired by - in that it starts off as a close copy of - the

Elm Vega-Lite walkthrough

created by Jo Wood, and

converted as necessary for the differences between hvega and

elm-vegalite.

The Elm tutorial is based on the talk given by

Wongsuphasawat et al at the 2017 Open Vis Conf.

The tutorial targets version 4 of the Vega-Lite specification and

the functionality provided in version 0.12.0.0 of hvega (although

a number of examples could be simplified by removing the

now-optional type information as of Vega-Lite 4.14).

Synopsis

- stripPlot :: VegaLite

- stripPlotWithBackground :: VegaLite

- stripPlotY :: VegaLite

- gaiaData :: Data

- stripPlotWithColor :: VegaLite

- stripPlotWithColor2 :: VegaLite

- stripPlotWithColorOrdinal :: VegaLite

- pieChart :: VegaLite

- pieChartWithCounting :: VegaLite

- parallaxBreakdown :: VegaLite

- simpleHistogram :: Text -> VegaLite

- parallaxHistogram :: VegaLite

- gmagHistogram :: VegaLite

- ylogHistogram :: VegaLite

- gmagHistogramWithColor :: VegaLite

- gmagLineWithColor :: VegaLite

- yHistogram :: VegaLite

- starCount :: VegaLite

- starCount2 :: VegaLite

- densityParallax :: VegaLite

- densityParallaxGrouped :: VegaLite

- pointPlot :: VegaLite

- posPlot :: VegaLite

- skyPlot :: VegaLite

- choroplethLookupToGeo :: VegaLite

- smallMultiples :: VegaLite

- smallMultiples2 :: VegaLite

- densityMultiples :: VegaLite

- basePlot :: VegaLite

- layeredPlot :: VegaLite

- layeredDiversion :: VegaLite

- layeredCount :: VegaLite

- skyPlotWithGraticules :: VegaLite

- concatenatedPlot :: VegaLite

- concatenatedPlot2 :: VegaLite

- concatenatedSkyPlot :: VegaLite

- repeatPlot :: VegaLite

- choroplethLookupFromGeo :: VegaLite

- splomPlot :: VegaLite

- selectionProperties :: Text -> Text -> [PropertySpec]

- singleSelection :: VegaLite

- nearestSelection :: VegaLite

- multiSelection :: VegaLite

- eventSelection :: VegaLite

- intervalSelection :: VegaLite

- intervalSelectionY :: VegaLite

- transformSelection :: VegaLite

- legendSelection :: VegaLite

- widgetSelection :: VegaLite

- bindScales :: VegaLite

- coordinatedViews :: VegaLite

- coordinatedViews2 :: VegaLite

- contextAndFocus :: VegaLite

- crossFilter :: VegaLite

- loessExample :: VegaLite

- regressionExample :: VegaLite

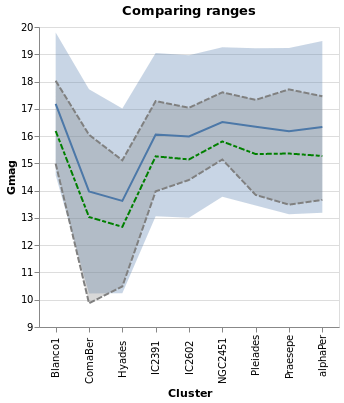

- errorManual :: VegaLite

- errorAuto :: VegaLite

- errorBars :: VegaLite

- errorBand :: VegaLite

- errorBox :: VegaLite

- comparingErrors :: VegaLite

- combinedPlot :: VegaLite

- duplicateAxis :: VegaLite

- compareCounts :: VegaLite

- parallaxView :: VegaLite

- skyPlotAitoff :: VegaLite

- clusterCenters :: VegaLite

A Grammar of Graphics

hvega is a wrapper for the Vega-Lite visualization grammar which itself is based on Leland Wilkinson's Grammar of Graphics. The grammar provides an expressive way to define how data are represented graphically. The seven key elements of the grammar as represented in hvega and Vega-Lite are:

Data- The input to visualize. Example functions:

dataFromUrl,dataFromColumns, anddataFromRows. Transform- Functions to change the data before they are visualized. Example functions:

filter,calculateAs,binAs,pivot,density, andregression. These functions are combined withtransform. Projection- The mapping of 3d global geospatial locations onto a 2d plane . Example function:

projection. Mark- The visual symbol, or symbols, that represent the data. Example types, used with

mark:Line,Circle,Bar,Text, andGeoshape. There are also ways to specify the shape to use for thePointtype, using theMShapesetting and theSymboltype. Encoding- The specification of which data elements are mapped to which mark characteristics (commonly known as channels). Example functions:

position,shape,size, andcolor. These encodings are combined withencoding. Scale- Descriptions of the way encoded marks represent the data. Example settings:

SDomain,SPadding, andSInterpolate. Guides- Supplementary visual elements that support interpreting the visualization. Example setings:

AxDomain(for position encodings) andLeTitleColor(for legend color, size, and shape encodings).

In common with other languages that build upon a grammar of graphics such as D3 and Vega, this grammar allows fine grain control of visualization design. Unlike those languages, Vega-Lite - and hvega in turn - provide practical default specifications for most of the grammar, allowing for a much more compact high-level form of expression.

The Vega-Lite Example Gallery provides a large-number of example visualizations that show off the capabilities of Vega-Lite. Hopefully, by the end of this tutorial, you will be able to create most of them.

How many Haskell extensions do you need?

The VegaLite module exports a large number of symbols,

but does not use any complex type machinery, and so it can be loaded

without any extensions, although the extensive use of the Text

type means that using the OverloadedStrings extension is strongly

advised.

The module does export several types that conflict with the Prelude, so one suggestion is to use

import Prelude hiding (filter, lookup, repeat)

A note on type safety

The interface provided by hvega provides limited type safety. Various

fields such as PmType are limited by the type of the argument (in this

case Measurement), but there's no support to check that the type makes

sense for the particular column (as hvega itself does not inspect the

data source). Similarly, hvega does not stop you from defining

properties that are not valid for a given situation - for instance

you can say toVegaLite []

Version 0.5.0.0 did add some type safety for a number of functions -

primarily encoding and transform - as the types they accept

have been restricted (to [ and EncodingSpec][

respectively), so that they can not be accidentally combined.TransformSpec]

Comparing hvega to Elm Vega-Lite

hvega started out as a direct copy of

elm-vegalite,

and has been updated to try and match the functionality of that package.

However, hvega has not (yet?) followed elm-vegalite into using

functions rather than data structures to define the options: for

example, elm-vegalite provides pQuant n which in hvega is the

combination of PName nPmType Quantitativehvega.

The top-level functions - such as dataFromUrl, encoding, and

filter - are generally the same. As the VegaLite schema has expanded

over time the differences between the two approaches has also grown.

Version 0.5.0.0 does introduce more-significant changes, in that

there are now separate types for a number of functions - such as

encoding, transform, and select - to help reduce the

chance of creating invalid visualizations.

What data are we using?

Rather than use the Seattle weather dataset, used in the Elm walkthrough

(if you go through the Vega-Lite Example Gallery

you may also want to look at different data ;-), I am going to use a

small datset from the Gaia satellite,

which has - and still is, as of early 2020 - radically-improved our knowledge

of our Galaxy. The data itself is from the paper

"Gaia Data Release 2: Observational Hertzsprung-Russell diagrams"

(preprint on arXiV)

(NASA ADS link).

We are going to use Table 1a, which was downloaded from the

VizieR archive

as a tab-separated file (aka TSV format).

The file contains basic measurements for a number of stars in

nine open clusters that all lie within 250 parsecs of the Earth

(please note, a parsec is a measure of distance, not time, no matter

what some ruggedly-handsome ex-carpenter

might claim). The downloaded file is called

gaia-aa-616-a10-table1a.no-header.tsv, although I have

manually edited it to a "more standard" TSV form (we Astronomers like

our metadata, and tend to stick it in inappropriate places, such as the

start of comma- and tab-separated files, which really mucks up

other-people's parsing code). The first few rows in the file are:

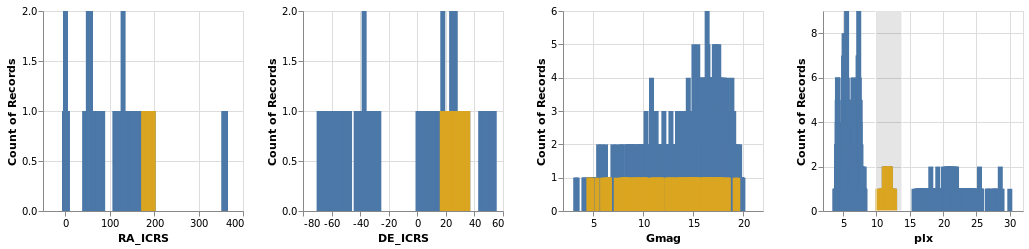

| Source | Cluster | RA_ICRS | DE_ICRS | Gmag | plx | e_plx |

|---|---|---|---|---|---|---|

| 49520255665123328 | Hyades | 064.87461 | +21.75372 | 12.861 | 20.866 | 0.033 |

| 49729231594420096 | Hyades | 060.20378 | +18.19388 | 5.790 | 21.789 | 0.045 |

| 51383893515451392 | Hyades | 059.80696 | +20.42805 | 12.570 | 22.737 | 0.006 |

| ... | ... | ... | ... | ... | ... | ... |

The Source column is a numeric identifier for the star in the Gaia database,

in this particular case the "DR2" release,

the Cluster column tells us which Star Cluster

the star belongs to, RA_ICRS and DE_ICRS

locate the star on the sky

and use the Equatorial coordinate system

(the ICRS term has a meaning too, but it isn't important for our

purposes),

Gmag measues the "brightness" of the star (as in most-things Astronomical,

this is not as obvious as you might think, as I'll go into below),

and the plx and e_plx columns give the measured

parallax of the star

and its error value, in units of

milli arcseconds.

And yes, I do realise after complaining about popular-culture references

confusing distances and time, I am now measuring distances with angles.

I think I've already mentioned that Astronomy is confusing...

Creating the Vega-Lite visualization

The function toVegaLite takes a list of grammar specifications,

as will be shown in the examples below, and creates a single JSON object

that encodes the entire design. As of hvega-0.5.0.0 this targets

version 4 of the Vega-Lite schema, but this can be over-ridden with

toVegaLiteSchema if needed (although note that this just changes the

version number in the schema field, it does not change the output to

match a given version).

There is no concept of ordering to these specification lists, in that

[ dataFromUrl ..., encoding ..., mark ...];

[ encoding ..., dataFromUrl ..., mark ... ];

and

[ encoding ..., mark ..., dataFromUrl ... ]

would all result in the same visualization.

The output of toVegaLite can be sent to the Vega-Lite runtime to

generate the Canvas or SVG output. hvega contains the helper

routines:

fromVL, which is used to extract the JSON contents fromVegaLiteand create an AesonValue;toHtml, which creates a HTML page which uses the Vega Embed Javascript library to display the Vega-Lite visualization;- and

toHtmlFile, which is liketoHtmlbut writes the output to a file.

A Strip Plot

In this section we shall concentrate on creating a single plot. Later on we shall try combining plots, after branching out to explore some of the different ways to visualize multi-dimensional data sets.

In the examples I link to symbols that have not been used in previous visualizations, to make it easier to see the use of new functionality.

Our first hvega plot

stripPlot :: VegaLite Source #

We could encode one of the numeric data fields as a strip plot where

the horizontal position of a tick mark is determined by the value

of the data item. In this case I am going to pick the "plx" column:

Open this visualization in the Vega Editor

toVegaLite[dataFromUrl"https://raw.githubusercontent.com/DougBurke/hvega/master/hvega/data/gaia-aa-616-a10-table1a.no-header.tsv" [TSV] ,markTick[] ,encoding(positionX[PName"plx",PmTypeQuantitative] []) ]

Notice how there is no explicit definition of the axis details, color choice or size. These can be customised, as shown in examples below, but the default values are designed to follow good practice in visualization design.

Three grammar elements are represented by the three functions

dataFromUrl, mark, and encoding.

The encoding function takes as a single parameter, a list of

specifications that are themselves generated by other functions. In

this case we use the function position to provide an encoding of the

"plx" field as the x-position in our plot. The precise way in which

the data value (parallax) is mapped to the x-position will depend on the type of

data we are encoding. We can provide a hint by declaring the

measurement type of the data field, here Quantitative indicating a

numeric measurement type. The final parameter of position is a list of

any additional encodings in our specification. Here, with only one

encoding, we provide an empty list.

As we build up more complex visualizations we will use many more encodings. To keep the coding clear, the idiomatic way to do this with hvega is to chain encoding functions using point-free style. The example above coded in this way would be

let enc = encoding

. position X [ PName "plx", PmType Quantitative ]

in toVegaLite

[ dataFromUrl "https://raw.githubusercontent.com/DougBurke/hvega/master/hvega/data/gaia-aa-616-a10-table1a.no-header.tsv" [TSV]

, mark Tick []

, enc []

]

Backgrounds

The default background color for the visualization, at least in the

Vega-Embed PNG and SVG output, is white (in Vega-Lite version 4;

prior to this it was transparent). In many cases this is

perfectly fine, but an explicit color can be specified using the

BackgroundStyle configuration option, as shown here, or with the

background function, which is used in the choropleth examples

below (choroplethLookupToGeo).

stripPlotWithBackground :: VegaLite Source #

The configure function allows a large number of configuration

options to be configured, each one introduced by the

configuration function. Here I set the color to be a light gray

(actually a very-transparent black; the Color type describes the

various supported color specifications, but it is generally safe to assume

that if you can use it in HTML then you can use it here).

Open this visualization in the Vega Editor

let enc = encoding

. position X [ PName "plx", PmType Quantitative ]

conf = configure

. configuration (BackgroundStyle "rgba(0, 0, 0, 0.1)")

in toVegaLite

[ dataFromUrl "https://raw.githubusercontent.com/DougBurke/hvega/master/hvega/data/gaia-aa-616-a10-table1a.no-header.tsv" [TSV]

, mark Tick []

, enc []

, conf []

]

If you want a transparent background (as was the default with Vega-Lite 3 and earlier), you would use

configuration(BackgroundStyle"rgba(0, 0, 0, 0)")

Challenging the primacy of the x axis

stripPlotY :: VegaLite Source #

There is nothing that forces us to use the x axis, so let's

try a vertical strip plot. To do so requires changing only

one character in the specifiction, that is the first argument to

position is now Y rather than X:

Open this visualization in the Vega Editor

let enc = encoding

. position Y [ PName "plx", PmType Quantitative ]

in toVegaLite

[ dataFromUrl "https://raw.githubusercontent.com/DougBurke/hvega/master/hvega/data/gaia-aa-616-a10-table1a.no-header.tsv" [TSV]

, mark Tick []

, enc []

]

Data sources

Since we are going to be using the same data source, let's define it here:

gaiaData = let addFormat n = (n,FoNumber) cols = [ "RA_ICRS", "DE_ICRS", "Gmag", "plx", "e_plx" ] opts = [Parse(map addFormat cols) ] in dataFromUrl "https://raw.githubusercontent.com/DougBurke/hvega/master/hvega/data/gaia-aa-616-a10-table1a.no-header.tsv" opts

The list argument to dataFromUrl allows for some customisation of

the input data. Previously I used [ to specify the data is in

tab-separated format, but it isn't actually needed here (since the

file name ends in ".tsv"). However, I have now explicitly defined how

to parse the numeric columns using TSV]Parse: this is because the columns

are read in as strings for this file by default, which actually doesn't

cause any problems in most cases, but did cause me significant problems

at one point during the development of the tutorial! There is limited

to no feedback from the visualizer for cases like this (perhaps I should

have used the Javascript console), and I only realised the problem thanks

to the Data Viewer tab in the

Vega Editor

(after a

suggestion from a colleague).

Data can also be defined algorithmically - using dataSequence and

dataSequenceAs - or inline - with dataFromColumns or

dataFromRows - or directly from JSON (as a Value) using

dataFromJson.

Examples showing dataFromColumns are the pieChart and skyPlotWithGraticules plots,

but let's not peak ahead!

Adding color as an encoding

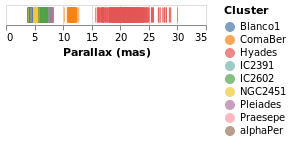

stripPlotWithColor :: VegaLite Source #

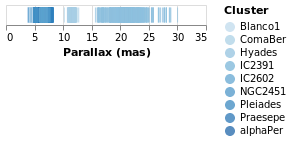

One question would be how the parallaxes vary by cluster: as parallax is measuring distance, then are the clusters similar distances away from us, or is there a range of values? A first look is to use another "channel" to represent (i.e. encode) the cluster:

Open this visualization in the Vega Editor

let enc = encoding

. position X [ PName "plx", PmType Quantitative, PAxis [ AxTitle "Parallax (mas)" ] ]

. color [ MName "Cluster", MmType Nominal ]

in toVegaLite

[ gaiaData

, mark Tick []

, enc []

]

Now each tick mark is colored by the cluster, and a legend is automatically

added to indicate this mapping. Fortunately the number of clusters in the

sample is small enough to make this readable! The color function has

added this mapping, just by giving the column to use (with MName) and

its type (MmType). The constructors generally begin with P for

position and M for mark, and as we'll see there are other property

types such as facet and text.

Vega-Lite supports several data types, represented

by the Measurement type. We have already seen Quantitative, which

is used for numeric data, and here we use Nominal for the clusters,

since they have no obvious ordering.

The labelling for the X axis has been tweaked using PAxis, in this

case the default value for the label (the column name) has been

over-ridden by an explicit value.

stripPlotWithColor2 :: VegaLite Source #

As of Vega-Lite version 4.14 we can now drop the type information when

it can be inferred. I am a little hazy of the rules, so I am going to

include the information (as it also means I don't have to change

the existing code!). However, as an example, we don't need to

add the MmType Nominal setting to the color channel, since the

following creates the same visualization as stripPlotWithColor:

Open this visualization in the Vega Editor

let enc = encoding

. position X [ PName "plx", PmType Quantitative, PTitle "Parallax (mas)" ]

. color [ MName "Cluster" ]

in toVegaLite

[ gaiaData

, mark Tick []

, enc []

]

Note that as well as removing MmType Nominal from the color encoding, I have

switched to the PTitle option (which is the same as PAxis [AxTitle ...].

Comparing Ordinal with Nominal data types

It is instructive to see what happens if we change the mark type for

the color encoding from Nominal to Ordinal.

stripPlotWithColorOrdinal :: VegaLite Source #

Open this visualization in the Vega Editor

let enc = encoding

. position X [ PName "plx", PmType Quantitative, PAxis [ AxTitle "Parallax (mas)" ] ]

. color [ MName "Cluster", MmType Ordinal ]

in toVegaLite

[ gaiaData

, mark Tick []

, enc []

]

As can be seen, the choice of color scale has changed to one more appropriate for an ordered set of values.

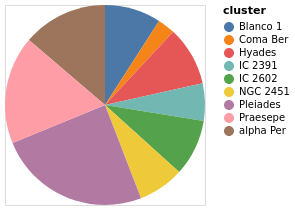

A Pie Chart

Before adding a second axis, let's temporarily look at another

"one dimensiona" chart, namely the humble pie chart.

The Arc mark type allows you to create pie charts, as well as more

complex visualizations which we won't discuss further in this

tutorial.

In this example we embed the data for the pie chart - namely the number

of stars per cluster - in the vsualization itself (using

dataFromColumns to create column data labelled "cluster" and

"count"). The position encoding is set to Theta, which is

given the star counts, and the color is set to the

Cluster name.

Open this visualization in the Vega Editor

let manualData =dataFromColumns[] .dataColumn"cluster" (Stringsclusters) . dataColumn "count" (Numberscounts) $ [] clusters = [ "alpha Per", "Blanco 1", "Coma Ber", "Hyades", "IC 2391" , "IC 2602", "NGC 2451", "Pleiades", "Praesepe"] counts = [ 740, 489, 153, 515, 325, 492, 400, 1326, 938] enc = encoding . positionTheta[PName "count", PmType Quantitative] . color [MName "cluster", MmType Nominal] in toVegaLite [ manualData , markArc[] , enc [] ]

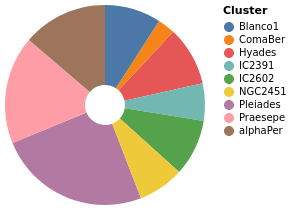

pieChartWithCounting :: VegaLite Source #

There are three main changes to pieChart:

MInnerRadiusis used to impose a minimum radius on the pie slices (so leaving a hole in the center);- the

ViewStyleconfiguration is used to turn off the plot edge; - and the count value is calculated automatically by the

PAggregatemethod (summing over the "Cluster" column), rather than having a hand-generated table of values encoded in the visualization.

Open this visualization in the Vega Editor

let enc = encoding

. position Theta [PAggregate Count, PmType Quantitative]

. color [MName Cluster, MmType Nominal]

in toVegaLite

[ gaiaData

, mark Arc [MInnerRadius 20]

, enc []

, configure (configuration (ViewStyle [ViewNoStroke]) [])

]

Adding an axis

While the strip plot shows the range of parallaxes, it is hard to

make out the distribution of values, since the ticks overlap. Even

changing the opacity of the ticks - by adding an encoding channel

like opacity [ MNumber 0.6 ]MOpacity

property of the mark - only helps so much. Adding a second

axis is easy to do, so let's see how the parallax distribution

varies with cluster membership.

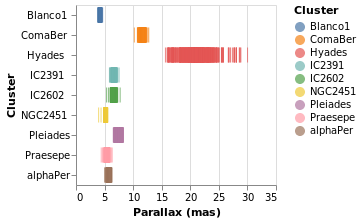

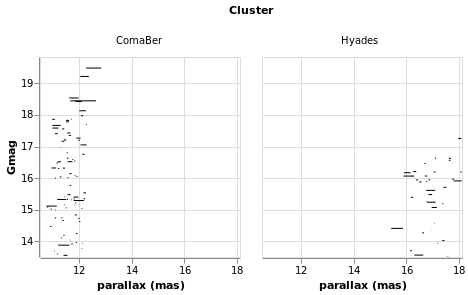

parallaxBreakdown :: VegaLite Source #

The stripPlotWithColor visualization can be changed to show two

variables just by adding a second position declaration, which

shows that the 7 milli-arcsecond range is rather crowded:

Open this visualization in the Vega Editor

let enc = encoding

. position X [ PName "plx", PmType Quantitative, PAxis [ AxTitle "Parallax (mas)" ] ]

. position Y [ PName "Cluster", PmType Nominal ]

. color [ MName "Cluster", MmType Nominal ]

in toVegaLite

[ gaiaData

, mark Tick []

, enc []

]

I have left the color-encoding in, as it makes it easier to compare to

stripPlotWithColor, even though it replicates the information provided

by the position of the mark on the Y axis. The yHistogram example

below shows how the legend can be removed from a visualization.

Creating a value to plot: aggregating data

We can also "create" data to be plotted, by aggregating data. In this case we can create a histogram showing the number of stars with the same parallax value (well, a range of parallaxes).

simpleHistogram :: Text -> VegaLite Source #

Since sensible (hopefully) defaults are provided for unspecified settings, it is relatively easy to write generic representations of a particular visualization. The following function expands upon the previous specifications by:

- taking a field name, rather than hard coding it;

- the use of

PBin[] - the addition of a second axis (

Y) which is used for the aggregated value (Count, which means that no column has to be specified withPName); - and the change from

TicktoBarfor themark.

Note that we did not have to specify how we wanted the histogram

calculation to proceed - e.g. the number of bins, the bin widths,

or edges - although we could have added this, by using a non-empty

list of BinProperty values with PBin, if the defaults are not

sufficient.

simpleHistogram :: T.Text -> VegaLite

simpleHistogram field =

let enc = encoding

. position X [ PName field, PmType Quantitative, PBin [] ]

. position Y [ PAggregate Count, PmType Quantitative ]

in toVegaLite

[ gaiaData

, mark Bar []

, enc []

]

parallaxHistogram :: VegaLite Source #

With simpleHistogram it becomes easy to get a histogram of the parallax

values:

Open this visualization in the Vega Editor

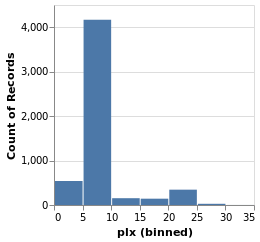

parallaxHistogram = simpleHistogram "plx"We can see that although parallaxes around 20 to 25 milli-arcseconds

dominated the earlier visualizations, such as stripPlotWithColor,

most of the stars have a much-smalled parallax, with values

in the range 5 to 10.

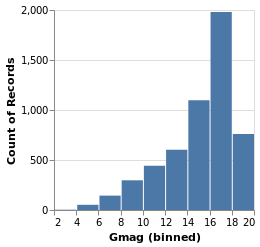

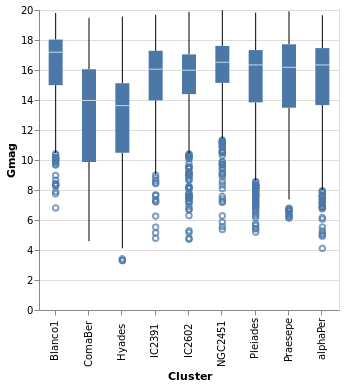

gmagHistogram :: VegaLite Source #

A different column (or field) of the input data can be viewed, just by changing the name in the specification:

Open this visualization in the Vega Editor

gmagHistogram = simpleHistogram "Gmag"

Here we can see that the number of stars with a given magnitude rises up until a value of around 18, and then drops off.

Changing the scale of an axis

In the case of parallaxHistogram, the data is dominated by

stars with small parallaxes. Changing the scale of the

Y axis to use a logarithmic, rather than linear, scale might

provide more information:

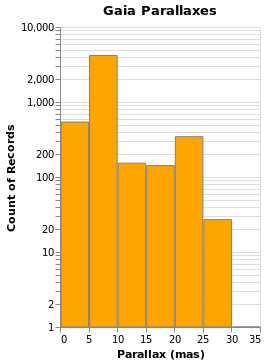

ylogHistogram :: VegaLite Source #

Open this visualization in the Vega Editor

let enc = encoding

. position X [ PName "plx", PmType Quantitative, PBin [], PAxis [ AxTitle "Parallax (mas)" ] ]

. position Y [ PAggregate Count, PmType Quantitative, PScale [ SType ScLog ] ]

in toVegaLite

[ gaiaData

, mark Bar [ MFill "orange", MStroke "gray" ]

, enc []

, height 300

, title "Gaia Parallaxes" []

]

There are four new changes to the visualization created by simpleHistogram (since PAxis

has been used above):

- an explicit choice of scaling for the Y channel (using

PScale); - the fill (

MFill) and edge (MStroke) colors of the histogram bars are different; - the height of the overall visualization has been increased;

- and a title has been added.

If you view this in the Vega Editor you will see the following warning:

A log scale is used to encode bar's y. This can be misleading as the height of the bar can be arbitrary based on the scale domain. You may want to use point mark instead.

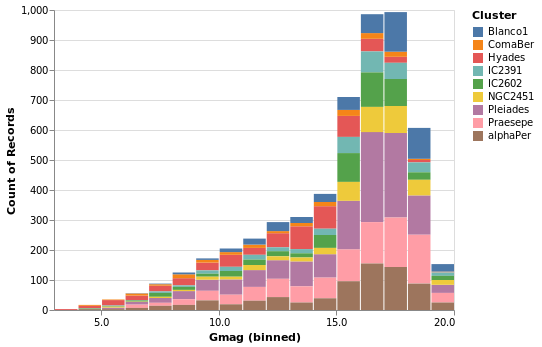

Stacked Histogram

gmagHistogramWithColor :: VegaLite Source #

A color encoding can also be added. When used with the Tick mark -

stripPlotWithColor - the result was that each tick mark was colored

by the "Cluster" field, but for the Bar mark the result is that

the bars are stacked together. I have also taken the opportunity to

widen the plot (width); define the binning scheme used, with Step

1AxValues.

Open this visualization in the Vega Editor

let enc = encoding

. position X [ PName "Gmag", PmType Quantitative, binning, axis ]

. position Y [ PAggregate Count, PmType Quantitative ]

. color [ MName "Cluster", MmType Nominal ]

binning = PBin [ Step 1 ]

axis = PAxis [ AxValues (Numbers [ 0, 5 .. 20 ]) ]

in toVegaLite

[ gaiaData

, mark Bar []

, enc []

, height 300

, width 400

]

Note that hvega will allow you to combine a color encoding with a ScLog

scale, even though a Vega-Lite viewer will not display the

resulting Vega-Lite specification, saying

Cannot stack non-linear scale (log)

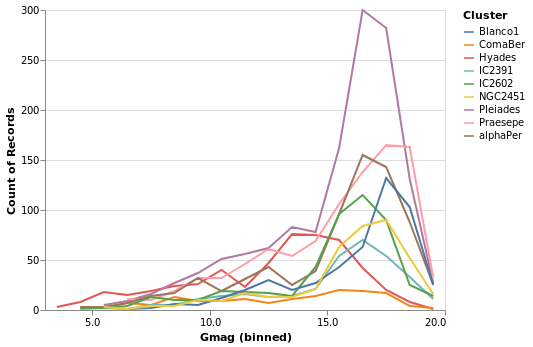

gmagLineWithColor :: VegaLite Source #

Notice how we never needed to state explicitly that we wished our bars

to be stacked. This was reasoned directly by Vega-Lite based on the

combination of bar marks and color channel encoding. If we were to

change just the mark function from Bar to Line, Vega-Lite produces an

unstacked series of lines, which makes sense because unlike bars,

lines do not occlude one another to the same extent.

Open this visualization in the Vega Editor

let enc = encoding

. position X [ PName "Gmag", PmType Quantitative, binning, axis ]

. position Y [ PAggregate Count, PmType Quantitative ]

. color [ MName "Cluster", MmType Nominal ]

binning = PBin [ Step 1 ]

axis = PAxis [ AxValues (Numbers [ 0, 5 .. 20 ]) ]

in toVegaLite

[ gaiaData

, mark Line []

, enc []

, height 300

, width 400

]

You don't have to just count

The previous histogram visualizations have taken advantage of Vega-Lite's

ability to bin up (Count) a field, but there are a number of aggregation

properties (as defined by the Operation type). For example, there

are a number of measures of the "spread" of a population, such as

the sample standard deviation (Stdev).

You can also synthesize new data based on existing data, with the

transform operation. Unlike the encoding function, the order

of the arguments to transform do matter, as they control the

data flow (e.g. you can not filter a data set if you have not

created the field to be filtered).

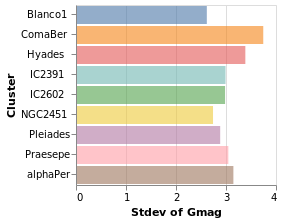

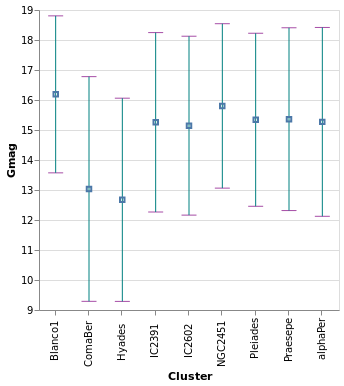

yHistogram :: VegaLite Source #

The aim for this visualization is to show the spread in the Gmag field

for each cluster, so we now swap the axis on which the aggregate is

being applied (so that the cluster names appear on the y axis),

and hide the legend that is applied (using MLegend []

Open this visualization in the Vega Editor

let enc = encoding

. position X [ PName "Gmag", PmType Quantitative, PAggregate Stdev ]

. position Y [ PName "Cluster", PmType Nominal ]

. color [ MName "Cluster", MmType Nominal, MLegend [] ]

in toVegaLite

[ gaiaData

, mark Bar [ MOpacity 0.6 ]

, enc []

]

The bar opacity is reduced slightly with 'MOpacity 0.6' so that the

x-axis grid lines are visible. An alternative would be to change the

AxZIndex value for the X encoding so that it is drawn on top of

the bars.

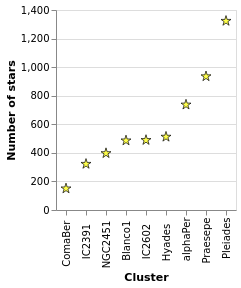

starCount :: VegaLite Source #

Aggregation can happen in the position channel - as we've seen with

the PAggregate option - or as a transform, where we create

new data to replace or augment the existing data. In the following

example I use the aggregate transform to calculate the number of

rows in the original dataset per cluster with the

Count operation. This effectively replaces

the data, and creates a new one with the fields "Cluster" and

"count".

The other two major new items in this visualization are that the

X axis has been ordered to match the Y axis (using ByChannel and

PSort in the position encoding), and I have specified my own SVG

definition for the symbols with SymPath and MShape.

Open this visualization in the Vega Editor

let trans =transform.aggregate[opAsCount"" "count" ] [ "Cluster" ] enc = encoding . position X [ PName "Cluster" , PmType Nominal ,PSort[ByChannelChY] ] . position Y [ PName "count" , PmType Quantitative , PAxis [ AxTitle "Number of stars" ] ] star =SymPath"M 0,-1 L 0.23,-0.23 L 1,-0.23 L 0.38,0.21 L 0.62,0.94 L 0,0.49 L -0.62,0.94 L -0.38,0.21 L -1,-0.23 L -0.23,-0.23 L 0,-1 z" in toVegaLite [ gaiaData , trans [] , enc [] , markPoint[MShapestar , MStroke "black" ,MStrokeWidth1 , MFill "yellow" ,MSize100 ] ]

Notes:

- the star design is based on a Wikipedia design, after some hacking and downsizing (such as losing the cute eyes);

- when using

CountwithopAs, the firstFieldNameargument is ignored, so I set it to the empty string""(it's be great if the API were such we didn't have to write dummy arguments, but at presenthvegadoesn't provide this level of safety); - although the order of operations of

transformis important, here I only have one (theaggregatecall); - and the order of the arguments to

toVegaLitedoes not matter (so you can have thetransformappear beforeencodingor after it).

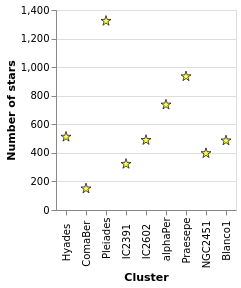

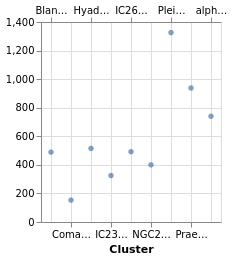

starCount2 :: VegaLite Source #

I've shown that the number of stars per cluster increases when ordered by increasing count of the number of stars per cluster, which is perhaps not the most informative visualization. How about if I ask if there's a correlation between number of stars and distance to the cluster (under the assumption that objects further away can be harder to detect, so there might be some form of correlation)?

To do this, I tweak starCount so that we also calculate the

parallax to each cluster in the transform - in this case taking

the median value of the distribution thanks to the Median operation - and

then using this new field to order the X axis with ByFieldOp. Since

parallax is inversely correlated with distance we use the

Descending option to ensure the clusters are drawn from near to

far. We can see that there is no obvious relation with distance.

Open this visualization in the Vega Editor

let trans = transform

. aggregate [ opAs Count "" "count"

, opAs Median "plx" "plx_med"

]

[ "Cluster" ]

enc = encoding

. position X [ PName "Cluster"

, PSort [ ByFieldOp "plx_med" Max

, Descending

]

]

. position Y [ PName "count"

, PmType Quantitative

, PAxis [ AxTitle "Number of stars" ]

]

star = SymPath "M 0,-1 L 0.23,-0.23 L 1,-0.23 L 0.38,0.21 L 0.62,0.94 L 0,0.49 L -0.62,0.94 L -0.38,0.21 L -1,-0.23 L -0.23,-0.23 L 0,-1 z"

in toVegaLite [ gaiaData

, trans []

, enc []

, mark Point [ MShape star

, MStroke "black"

, MStrokeWidth 1

, MFill "yellow"

, MSize 100

]

]

Notes:

- I find the "Data Viewer" section of the Vega Editor rather useful when creating new data columns or structures, as you can actually see what has been created (I find Firefox works much better than Chrome here);

- the use of

ByFieldOphere is a bit un-settling, as you need to give it an aggregation-style operation to apply to the data field, but in this case we have already done this withopAs(so I pickMaxas we just need something that copies the value over).

We revisit this data in layeredCount.

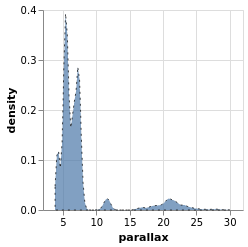

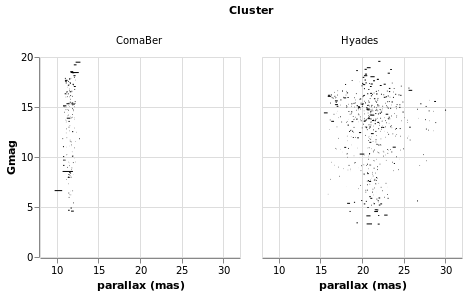

densityParallax :: VegaLite Source #

Vega-Lite supports a number of data transformations, including

several "pre-canned" transformations, such as a

kernel-density estimator, which I will use here to

look for structure in the parallax distribution. The earlier

use of a fixed-bin histogram - parallaxHistogram and ylogHistogram -

showed a peak around 5 to 10 milli-arcseconds, and a secondary

one around 20 to 25 milli-arcseconds, but can we infer anything more

from the data?

I have already shown that the transform

function works in a similar manner to encoding, in that

it is applied to one or more transformations. In this

example I use the density transform - which is new to Vega Lite 4 -

to "smooth" the data without having to pre-judge the data

(although there are options to configure the density estimation).

The transform creates new fields - called "value" and "density"

by default - which can then be displayed as any other field. In this

case I switch from Bar or Line to use the Area encoding, which

fills in the area from the value down to the axis.

Open this visualization in the Vega Editor

let trans = transform

. density "plx" []

enc = encoding

. position X [ PName "value"

, PmType Quantitative

, PAxis [ AxTitle "parallax" ]

]

. position Y [ PName "density", PmType Quantitative ]

in toVegaLite

[ gaiaData

, mark Area [ MOpacity 0.7

, MStroke "black"

, MStrokeDash [ 2, 4 ]

, ]

, trans []

, enc []

]

The parallax distribution shows multiple peaks within the 5 to 10 milli-arcsecond range, and separate peaks at 12 and 22 milli-arcseconds.

The properties of the area mark are set here to add a black,

dashed line around the edge of the area. The DashStyle configures

the pattern by giving the lengths, in pixels,

of the "on" and "off" segments, so here the gaps are twice the

length of the line segments. This was done more to show that it

can be done, rather than because it aids this particular visualization!

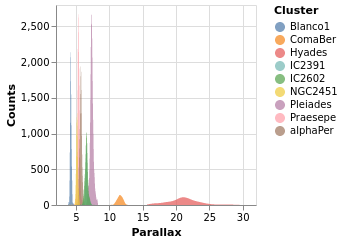

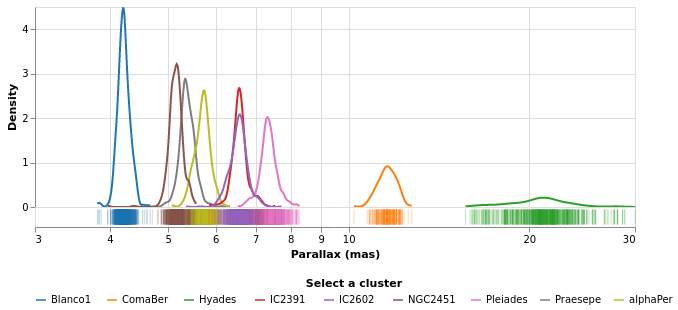

densityParallaxGrouped :: VegaLite Source #

The density estimation can be configured using DensityProperty.

Here we explicitly label the new fields to create (rather than

use the defaults), and ensure the calculation is done per cluster.

This means that the data range for each cluster is used to

perform the KDE, which in this case is useful (as it ensures the

highest fidelity), but there are times when you may wish to ensure

a consistent scale for the evaluation (in which case you'd use

the DnExtent option, as well as possibly DnSteps, to define

the grid). The final change is to switch from density estimation

to counts for the dependent axis.

Open this visualization in the Vega Editor

let trans = transform

. density "plx" [ DnAs "xkde" "ykde"

, DnGroupBy [ "Cluster" ]

, DnCounts True

]

enc = encoding

. position X [ PName "xkde"

, PmType Quantitative

, PAxis [ AxTitle "Parallax" ]

]

. position Y [ PName "ykde"

, PmType Quantitative

, PAxis [ AxTitle "Counts" ]

]

. color [ MName "Cluster"

, MmType Nominal

]

in toVegaLite

[ gaiaData

, mark Area [ MOpacity 0.7 ]

, trans []

, enc []

]

Note how the clusters separate out in pretty cleanly, but - as

also shown in the pointPlot visualization below - it is pretty

busy around 7 milli arcseconds.

The counts here (the Y axis) are significantly larger than

seen than the actual count of stars, shown in starCount. It

appears that the DnCounts TruecompareCounts plot below.

Plotting with points

At this point we make a signifiant detour from the Elm Vega-Lite

walkthtough, and look a bit more at the Point mark, rather than creating

small-multiple plots. Don't worry, we'll get to them later.

I apologize for the alliterative use of point here.

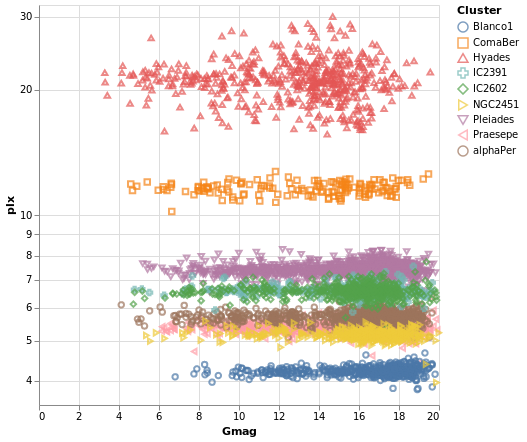

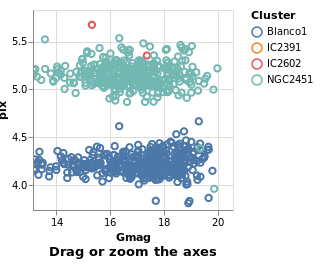

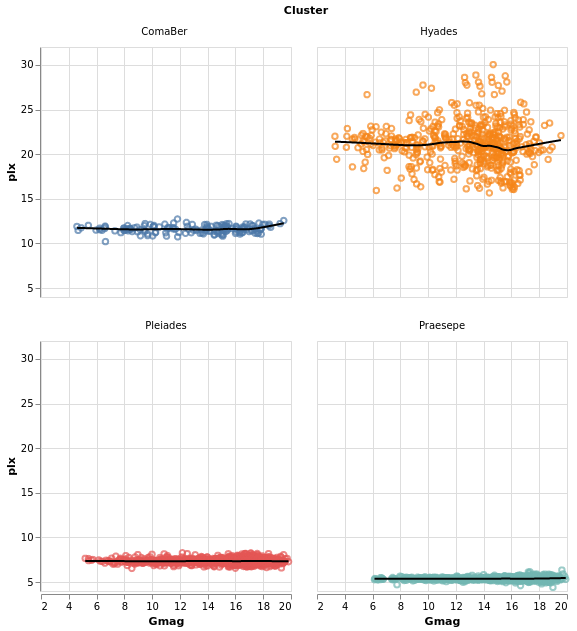

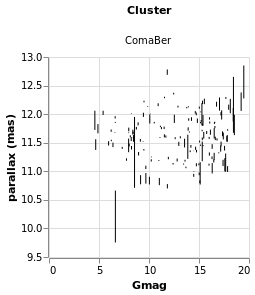

pointPlot :: VegaLite Source #

Here I use the Point mark to display the individual

Gmag, plx pairs, encoding by both color and 'shape.

Since the encoding uses the same field of the data (the Cluster

name), Vega-Lite is smart enough to only display one legend,

which contains the point shape and color used for each cluster.

Since the parallax values are bunched together at low values,

a logarithmic scale (ScLog) is used for the y axis, along with

commands to define the actual axis domain - by turning off the

IsNice support and listing the minimum and maximum values

for the axis with SDomain.

Open this visualization in the Vega Editor

let enc = encoding

. position X [ PName "Gmag", PmType Quantitative ]

. position Y [ PName "plx", PmType Quantitative, PScale scaleOpts ]

. color cluster

. shape cluster

scaleOpts = [ SType ScLog, SDomain (DNumbers [3.5, 32]), SNice (IsNice False) ]

cluster = [ MName "Cluster", MmType Nominal ]

,

in toVegaLite [ gaiaData

, mark Point []

, enc []

, width 400

, height 400

]

We can see that each cluster appears to have a separate parallax

value (something we have seen in earlier plots, such as parallaxBreakdown),

and that it doesn't really vary with Gmag. What this is telling

us is that for these star clusters, the distance to each member star

is similar, and that they are generally at different distances

from us. However, it's a bit hard to tell exactly what is going

on around 5 to 6 milli arcseconds, as the clusters overlap here.

This line of thinking leads us nicely to map making, but before we

try some cartography, I wanted to briefly provide some context for

these plots. The

parallax of a star

is a measure of its distance from us, but it is an inverse relationship,

so that nearer stars have a larger parallax than those further from us.

The Gmag column measures the apparent brightness of the star, with the

G part indicating what

part of the spectrum

is used (for Gaia, the G band is pretty broad, covering much of

the visible spectrum), and the mag part is because optical Astronomy

tends to use

- the logarithm of the measured flux

- and then subtract this from a constant

so that larger values mean fainter sources. These are also apparent magnitues, so that they measure the flux of the star as measured at Earth, rather than its intrinsic luminosity (often defined as an object's absolute magnitude).

We can see that the further the cluster is from us - that is, as we move down this graph to smaller parallax values - then the smallest stellar magnitude we can see in a cluster tends to increase, but that there are stars up to the maximum value (20) in each cluster. This information can be used to look at the distribution of absolute magnitudes of stars in a cluster, which tells us about its evolutionary state - such as is it newly formed or old - amongst other things. However, this is straying far from the aim of this tutorial, so lets get back to plotting things.

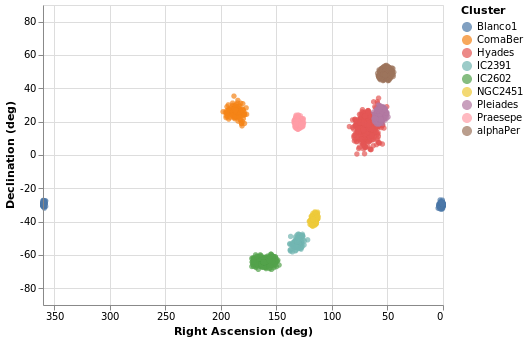

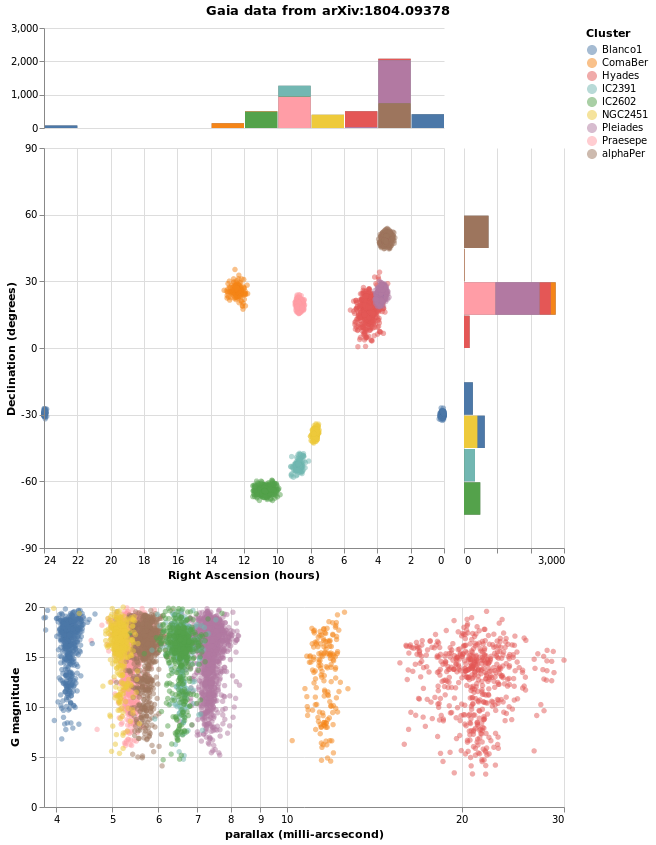

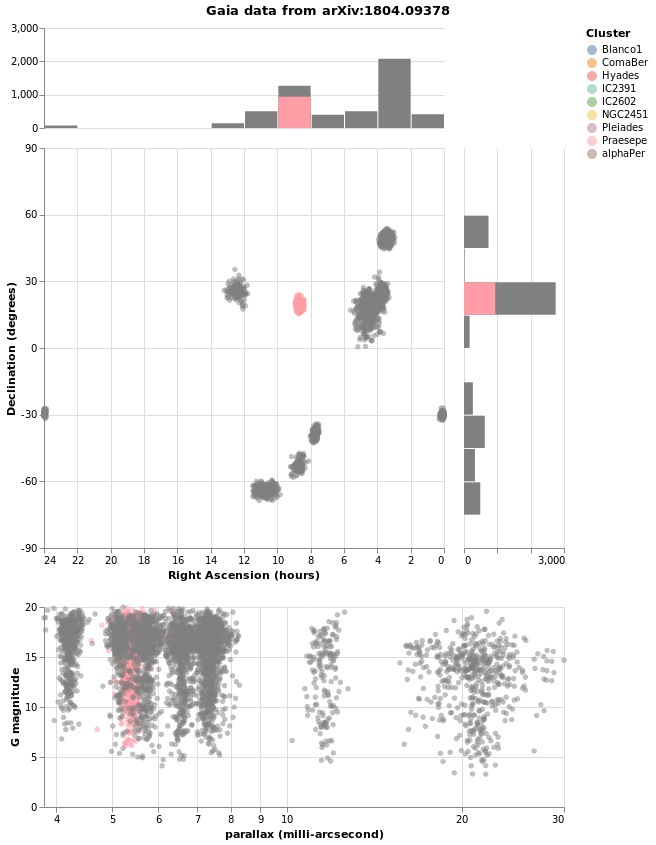

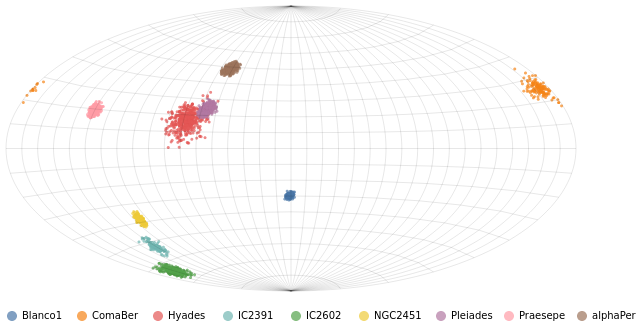

Making a map

We have some hint that the different clusters are distinct objects

in space, in that they appear to be different distances from us,

but where does the "cluster" in the name "Stellar Cluster"

come from? Well, we can try plotting up the position of each star

on the sky - using the RA_ICRS and DE_ICRS fields - to find out.

The following specification should only contain one new feature - other

than sneakily switching from Point to Circle type for the mark - and

that is displaying the x axis (namely Right Ascension) in reverse (using

PSort [ Descending ]clusterCenters).

Open this visualization in the Vega Editor

let enc = encoding

. position X (axOpts "RA_ICRS" "Right Ascension (deg)" ++ [ raScale, PSort [ Descending ] ])

. position Y (axOpts "DE_ICRS" "Declination (deg)" ++ [ decScale ])

. color [ MName "Cluster", MmType Nominal ]

axOpts field lbl = [ PName field, PmType Quantitative, PAxis [ AxTitle lbl ]]

scaleOpts minVal maxVal = [ SDomain (DNumbers [ minVal, maxVal ]), SNice (IsNice False) ]

raScale = PScale (scaleOpts 0 360)

decScale = PScale (scaleOpts (-90) 90)

in toVegaLite [ gaiaData

, mark Circle []

, enc []

, width 400

, height 300

]

We can see that these clusters are indeed localised on the sky, with Hyades looking like it covers the largest area. However, we should be careful and not forget either Grover's hard work or Father Ted's explanation to Father Dougal, since these clusters are different distances from us, which makes size a tricky thing to measure from this plot.

There is also the fact that I have used possibly the worst way of displaying the Right Ascension and Declination data. Although the night sky is not the same as the Earth's surface, the issues when trying to display the Globe on a flat surface also apply to displaying up the sky. For this plot the distortions near the pole are huge, although fortunately we don't have any clusters too close to either pole.

Using a projection

Vega-Lite supports a large number of projections - via the

Projection type - which we use below to create

a similar visualization to posPlot. Here I use the

Longitude and Latitude channels, along with a

Mercator projection, to display the data.

The trick in this case is that longitude runs from -180 to 180

degrees, but the data has Right Ascension going from 0

to 360 degrees. Here we take advantage of Vega Lite's

data transformation capabilities and create a new

column - which I call longitude - and is defined as

"Right Ascension - 360" when the Right Ascension is

greater than 180, otherwise it is just set to the

Right Ascension value. The "expression" support

is essentially a sub-set of Javascript, and the datum

object refers to the current row. The new data column

can then be used with the Longitude channel.

Thankfully the Latitude channel can use the Declination values

without any conversion.

As can be seen, this flips the orientation compared to

posPlot, and makes the center of the plot have a

longiture (or Right Ascension), of 0 degrees.

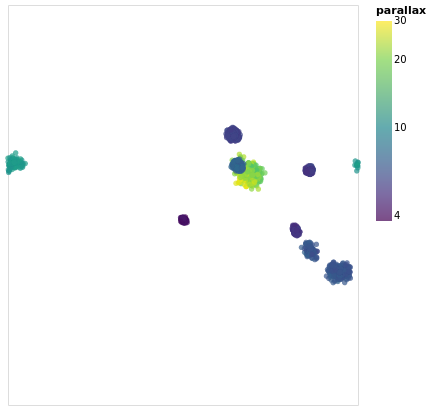

Open this visualization in the Vega Editor

let trans = transform

. calculateAs

"datum.RA_ICRS > 180 ? datum.RA_ICRS - 360 : datum.RA_ICRS"

"longitude"

axOpts field = [ PName field, PmType Quantitative ]

enc = encoding

. position Longitude (axOpts "longitude")

. position Latitude (axOpts "DE_ICRS")

. color [ MName "plx"

, MmType Quantitative

, MScale [ SType ScLog

, SScheme "viridis" []

]

, MLegend [ LTitle "parallax" ]

]

. tooltip [ TName "Cluster", TmType Nominal ]

in toVegaLite [ width 400

, height 350

, projection [ PrType Mercator ]

, gaiaData

, trans []

, enc []

, mark Circle []

]

The other major change made to posPlot is that the stars are now

color-encoded by the log of their parallax value

rather than cluster membership,

and the color scheme has been changed to use the "viridis" color

scale.

The LTitle option is set for the legend (on the

color channel) rather than use the default (which in

this case would be "plx").

Since parallax is a numeric value, with ordering (i.e. Quantitative),

the legend has changed from a list of symbols to a gradient bar.

To account for this lost of information, I have added a tooltip

encoding so that when the pointer is moved over a star its cluster

name will be displayed. This is, unfortunately,

only visible in the interactive version of the visualization.

Note that the tooltip behavior changed in Vega Lite 4 (or in the

code used to display the visualizations around this time), since

prior to this tooltips were on by default. Now tooltips have to be

explicitly enabled (with tooltip or tooltips).

From this visualization we can see that the apparent size of the cluster

(if we approximate each cluster as a circle, then we can think of the radius

of the circle as a measure of size) depends on parallax, with larger

sizes having larger parallaxes. This is because the distance to a star

is inversely-dependent on its parallax, so larger parallaxes mean the

star is closer to us. However, there is no reason that the intrinsic

size - that is its actual radius - of each cluster is the same.

We can see that although the Hyades and Pleiades clusters overlap

on the sky, they have significantly-different parallaxes (as can

be seen in pointPlot for example), with Hyades being the closer

of the two.

It is possible to add graticules - with the aptly-named

graticule function - but this requires the use of layers,

which we haven't covered yet. If you are impatient you can jump

right to skyPlotWithGraticules!

If you want to see how to "create your own projection", see

skyPlotAitoff, which uses the

Aitoff projection

(which is unfortunately not available to

Vega-Lite directly).

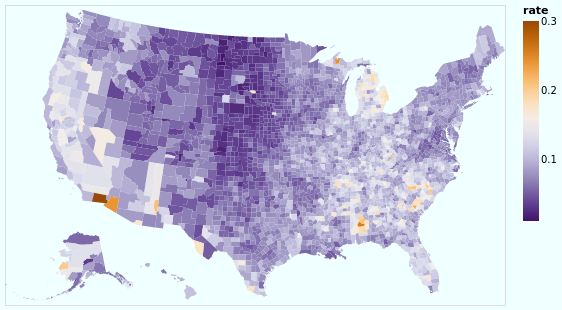

Choropleth with joined data

There are some things vega-lite can do, don't fit as well into the flow of looking at astronomy data! But having examples is helpful. So we bring our eyes back to earth, and demonstrate a basic "choropleth", a map - in the sense of pictures of bounded geographical regions - with data for each location indicated by color.

Don't worry, we'll soon be back staring at the stars!

The choropleth examples (there's another one later on) use a map of the United States as the data source, which we abstract out into a helper function:

usGeoData :: T.Text -> Data

usGeoData f = dataFromUrl "https://raw.githubusercontent.com/vega/vega/master/docs/data/us-10m.json" [TopojsonFeature f]

The argument gives the "topological" feature in the input file to

display (via TopojsonFeature). You can read more information on this

in the Vega-Lite documentation.

This section was contributed by Adam Conner-Sax. Thanks!

choroplethLookupToGeo :: VegaLite Source #

Our first choropleth is based on the Choropleth example from the Vega-Lite Example Gallery.

The key elements are:

- Using the

TopojsonFeaturefeature for the data source (thanks tousGeoData). - Choosing the correct "feature" name in the geographic data, here

"counties"in the argument to ourusGeoDatahelper function. - Performing a Vega-Lite lookup to join the data to be plotted (the unemployment rate)

to the geographic data. In this case, the column name in the unemployment data -

"id"given as the first argument tolookup- is the same as the column name in the geographic data, the third argument tolookup. Those can be different. - Specifying a projection, that is a mapping from (longitude, latitude) to (x,y)

coordinates. Since we are looking at data for the main-land United States of

America we use

AlbersUsa(rather than looking at the whole globe, as we did in earlier visualizations), which lets us view the continental USA as well as Alaska and Hawaii. - Using the

Geoshapemark.

Open this visualization in the Vega Editor

let unemploymentData = dataFromUrl "https://raw.githubusercontent.com/vega/vega/master/docs/data/unemployment.tsv" []

in toVegaLite

[ usGeoData "counties"

, transform

. lookup "id" unemploymentData "id" (LuFields ["rate"])

$ []

, projection [PrType AlbersUsa]

, encoding

. color [ MName "rate", MmType Quantitative, MScale [ SScheme "purpleorange" [] ] ]

$ []

, mark Geoshape []

, width 500

, height 300

, background "azure"

]

So, we have seen how to join data between two datasets - thanks to

lookup - and display the unemployment rate (from one data source)

on a map (defined from another data source).

I have chosen a

diverging color scheme

for the rate, mainly just because I can, but also because I wanted to see how

the areas with high rates were clustered. I've also shown how the background

function can be used (it is simpler than the configuration approach

used earlier in stripPlotWithBackground).

Our next choropleth - choroplethLookupFromGeo - will show how we can join

multiple fields across data sources, but this requires understanding how

Vega-Lite handles multiple views, which is fortunately next in our

tutorial.

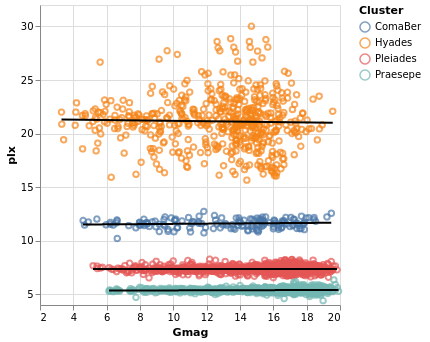

Layered and Multi-View Compositions

The Stacked-Histogram plot - created by gmagHistogramWithColor - showed

the distribution of the "Gmag" field by cluster, but it was hard to

compare them. A common approach in this situation is to split up

the data into multiple plots -

the small multiple

approach (also known as trellis plots) - which we can easily achieve in

Vega Lite. It also gets us back on track with the Elm walkthrough.

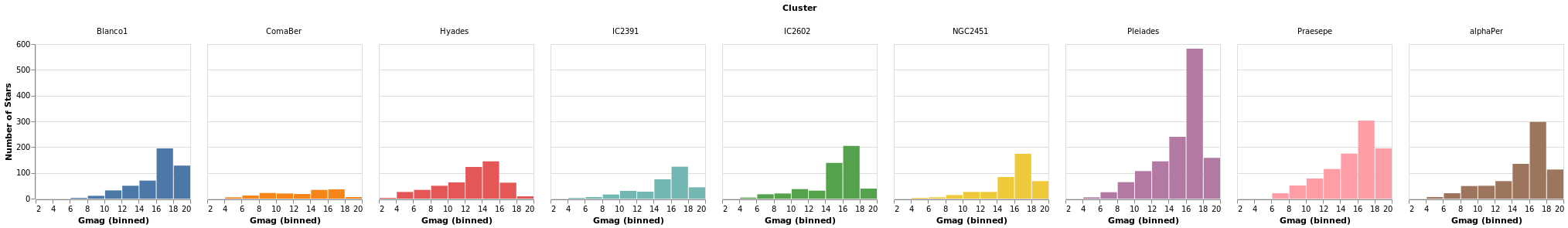

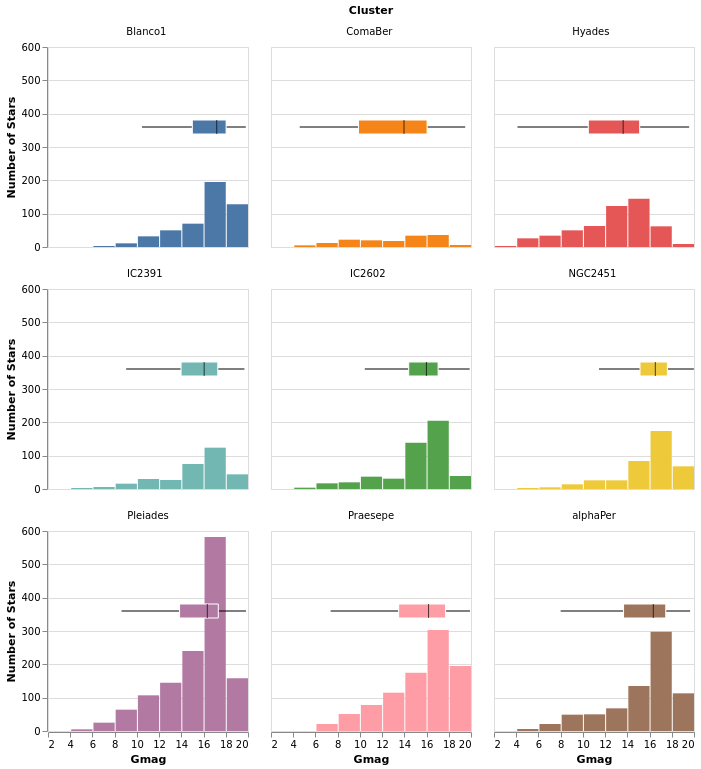

smallMultiples :: VegaLite Source #

Our first attempt is with the column function, which tells

Vega-Lite to create a plot for each Cluster field (and introduces

us to the F family of FacetChannel constructors).

The legend has been turned off with MLegend []

Open this visualization in the Vega Editor

let enc = encoding

. position X [ PName "Gmag", PmType Quantitative, PBin [] ]

. position Y yAxis

. color [ MName "Cluster", MmType Nominal, MLegend [] ]

. column [ FName "Cluster", FmType Nominal ]

yAxis = [ PAggregate Count

, PmType Quantitative

, PAxis [ AxTitle "Number of Stars" ]

]

in toVegaLite

[ gaiaData

, mark Bar []

, enc []

]

Since we have nine clusters in the sample, the overall visualization is too wide, unless you have a very-large monitor. Can we do better?

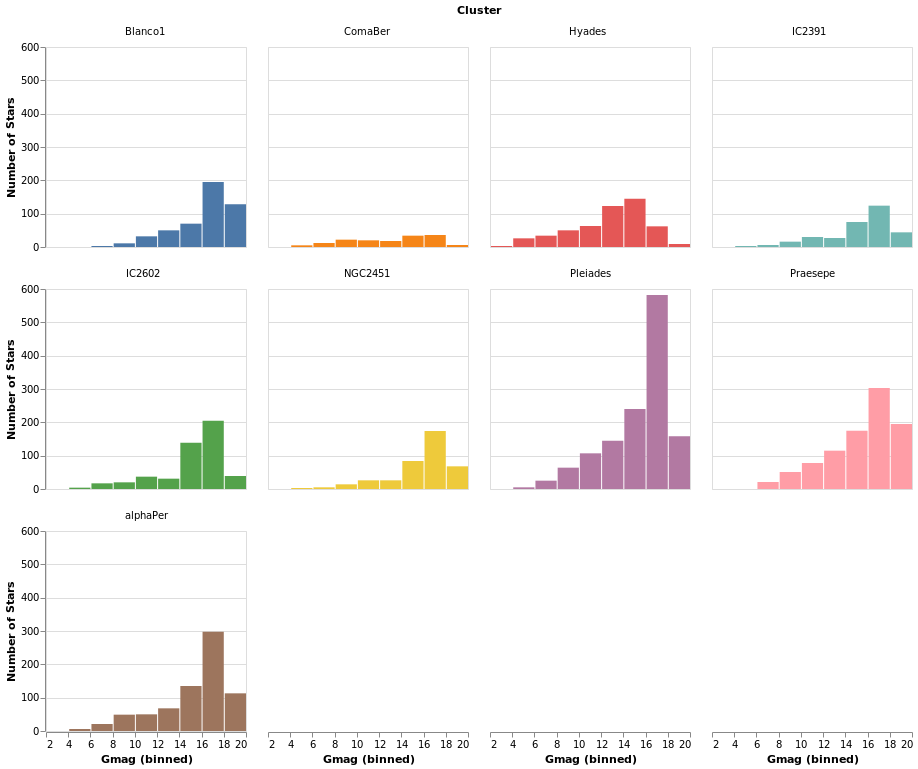

smallMultiples2 :: VegaLite Source #

The number of columns used in small-multiple can be defined using the

columns function. However, this requires us to:

- move the facet definition out from the encoding and into the top-level,

with the

facetFlowfunction; - and define the plot as a separate specification, and apply it

with

specificationandasSpec.

The actual syntactic changes to smallMultiples are actually

fairly minor:

let enc = encoding

. position X [ PName "Gmag", PmType Quantitative, PBin [] ]

. position Y yAxis

. color [ MName "Cluster", MmType Nominal, MLegend [] ]

yAxis = [ PAggregate Count

, PmType Quantitative

, PAxis [ AxTitle "Number of Stars" ]

]

in toVegaLite

[ gaiaData

, columns 4

, facetFlow [ FName "Cluster", FmType Nominal ]

, specification (asSpec [ mark Bar [], enc [] ])

]

Open this visualization in the Vega Editor

Note that Vega Lite does support a "facet" field in its encodings,

but hvega follows Elm VegaLite and requires you to use this

wrapped facet approach.

I chose 4 columns rather than 3 here to show how "empty" plots

are encoded. You can see how a 3-column version looks in the

next plot, densityMultiples.

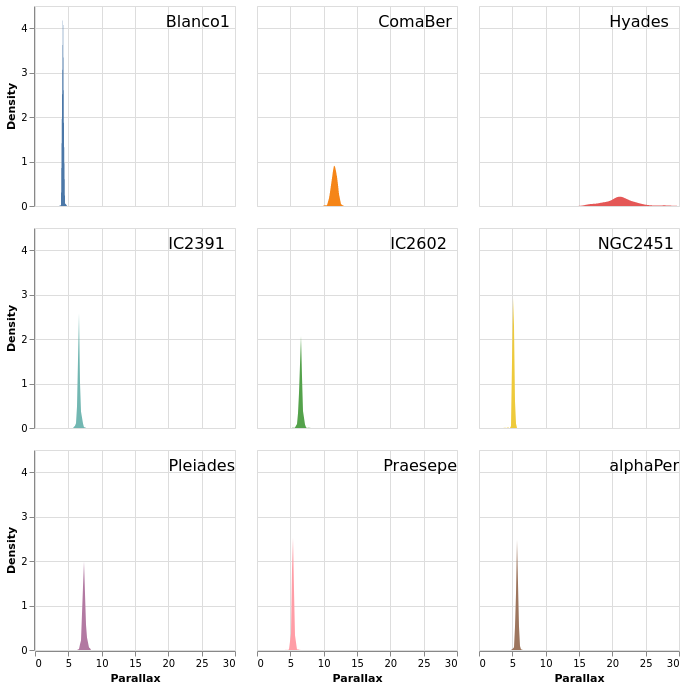

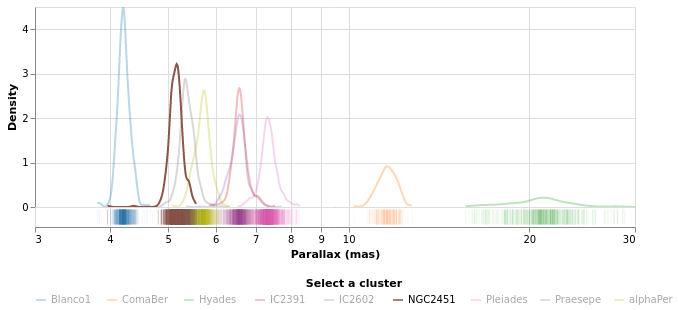

densityMultiples :: VegaLite Source #

Earlier - in densityParallaxGrouped - I used the Kernel-Density

Estimation support in Vega Lite 4 to show smoothed parallax

distributions, grouped by cluster. We can combine this with

the facetFlow approach to generate a plot per cluster

of the parallax distribution. I have used DnExtent to ensure

that the density estimation is done on the same grid for

each cluster.

The most important thing in this example is that I have

used a sensible number of columns (ending up in a three by three grid)!

The other significant changes to smallMultiples2 is that I have

used the FHeader option to control how the facet headers

are displayed: the title (which in this case was "Cluster")

has been hidden, and the per-plot labels made larger, but moved

down so that they lie within each plot. I am not 100% convinced

this is an intended use of HLabelPadding, but it seems to work!

Open this visualization in the Vega Editor

let trans = transform

. density "plx" [ DnAs "xkde" "ykde"

, DnGroupBy [ "Cluster" ]

, DnExtent 0 30

]

enc = encoding

. position X [ PName "xkde"

, PmType Quantitative

, PAxis [ AxTitle "Parallax" ]

]

. position Y [ PName "ykde"

, PmType Quantitative

, PAxis [ AxTitle "Density" ]

]

. color [ MName "Cluster"

, MmType Nominal

, MLegend []

]

headerOpts = [ HLabelFontSize 16

, HLabelAlign AlignRight

, HLabelAnchor AEnd

, HLabelPadding (-24)

, HNoTitle

]

spec = asSpec [ enc []

, trans []

, mark Area [ ]

]

in toVegaLite

[ gaiaData

, columns 3

, facetFlow [ FName "Cluster"

, FmType Nominal

, FHeader headerOpts

]

, specification spec

]

One plot, two plot, red plot, blue plot

There are four ways in which multiple views may be combined:

- The facet operator takes subsets of a dataset (facets) and

separately applies the same view specification to each of

those facets (as seen with the

columnfunction above). Available functions to create faceted views:column,row,facet,facetFlow, andspecification. - The layer operator creates different views of the data but

each is layered (superposed) on the same same space; for example

a trend line layered on top of a scatterplot.

Available functions to create a layered view:

layerandasSpec. - The concatenation operator allows arbitrary views (potentially

with different datasets) to be assembled in rows or columns.

This allows 'dashboards' to be built.

Available functions to create concatenated views:

vConcat,hConcat, andasSpec. - The repeat operator is a concise way of combining multiple views

with only small data-driven differences in each view.

Available functions for repeated views:

repeatandspecification.

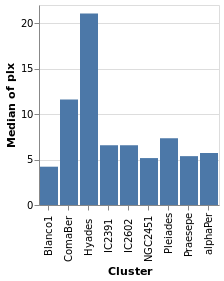

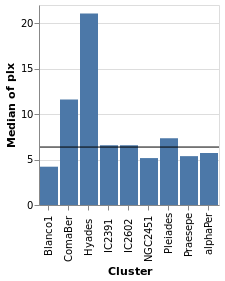

We start with a "basic" plot for the dataset: the median value of the parallax of the stars in each cluster.

Open this visualization in the Vega Editor

let plx = position Y [ PName "plx", PmType Quantitative, PAggregate Median ]

cluster = position X [ PName "Cluster", PmType Nominal ]

enc = encoding . cluster . plx

in toVegaLite

[ gaiaData

, mark Bar []

, enc []

]

Composing layers

layeredPlot :: VegaLite Source #

We start our exploration by combining two visualizations, layering

one on top of the other. The base plot shows the same data as

basePlot, and then on top we will show a horizontal line that

indicates the median parallax for all the stars in the sample.

Open this visualization in the Vega Editor

let plx = position Y [ PName "plx", PmType Quantitative, PAggregate Median ]

cluster = position X [ PName "Cluster", PmType Nominal ]

perCluster = [ mark Bar [], encoding (cluster []) ]

allClusters = [ mark Rule [] ]

in toVegaLite

[ gaiaData

, encoding (plx [])

, layer (map asSpec [perCluster, allClusters])

]

For this visualization, the specification starts with the data

source and an encoding, but only for the y axis (which means

that all layered plots use the same encoding for the axis). The

layer function introduces the different visualizations that

will be combined, each as there own "specification" (hence

the need to apply asSpec to both perCluster and allClusters).

Note that there is no x-axis encoding for the Rule, since the

data applies to all clusters (i.e. it should span the

whole visualization).

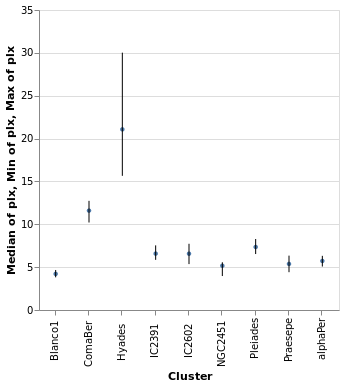

layeredDiversion :: VegaLite Source #

This example is similar to layeredPlot but includes an x-axis

encoding for the second layer. We use this to show the range of the

data - so the minimum to maximum parallax range of each cluster - with

the Rule type. The difference to the previous plot is that an

extra positional encoding is added (Y2) to define the end point

of each line (Y is used as the start point).

Open this visualization in the Vega Editor

let plx op = position Y [ PName "plx", PmType Quantitative, PAggregate op ]

cluster = position X [ PName "Cluster", PmType Nominal ]

median = [ mark Circle [ MSize 20 ]

, encoding (plx Median [])

]

range = [ mark Rule [ ]

, encoding

. plx Min

. position Y2 [ PName "plx", PAggregate Max ]

$ []

]

in toVegaLite

[ gaiaData

, encoding (cluster [])

, layer (map asSpec [ median, range ])

, width 300

, height 300

]

The MSize option is used to change the size of the circles so that they

do not drown out the lines (the size value indicates the area of the mark,

and so for circles the radius is proportional to the square root of this

size value; in practical terms I adjusted the value until I got something

that looked sensible).

Note that the y axis is automatically labelled with the different operation types that were applied - median, minimum, and maximum - although there is no indication of what marks map to these operations.

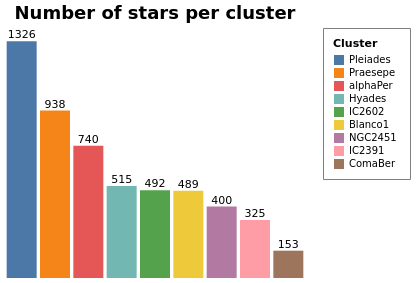

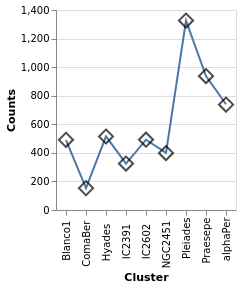

layeredCount :: VegaLite Source #

In this example (adapted from an example provided by Jo Wood)

I display the same data as in starCount, but

as two layers: the first is a histogram (using the Bar mark),

and the second displays the count value as a label with the

Text mark.

Open this visualization in the Vega Editor

let trans = transform

. aggregate [ opAs Count "" "count" ]

[ "Cluster" ]

chanSort = [ ByChannel ChY, Descending ]

baseEnc = encoding

. position X [ PName "Cluster"

, PmType Nominal

, PSort chanSort

, PAxis []

]

. position Y [ PName "count"

, PmType Quantitative

, PAxis []

]

barEnc = baseEnc

. color [ MName "Cluster"

, MmType Nominal

, MLegend [ LStrokeColor "gray"

, LPadding 10

]

, MSort chanSort

]

labelEnc = baseEnc

. text [ TName "count", TmType Quantitative ]

barSpec = asSpec [ barEnc [], mark Bar [] ]

labelSpec = asSpec [ labelEnc [], mark Text [ MdY (-6) ] ]

cfg = configure

. configuration (ViewStyle [ViewNoStroke])

in toVegaLite [ width 300

, height 250

, cfg []

, gaiaData

, title "Number of stars per cluster" [ TFontSize 18 ]

, trans []

, layer [ barSpec, labelSpec ]

]

Both axes have been dropped from this visualization since

the cluster name can be found from the legend and the

count is included in the plot. The same sort order is

used for the X axis and the color mapping, so that its

easy to compare (the first item in the legend is the

cluster with the most counts). Note that this changes the

color mapping (cluster to color) compared to previous

plots such as parallaxBreakdown.

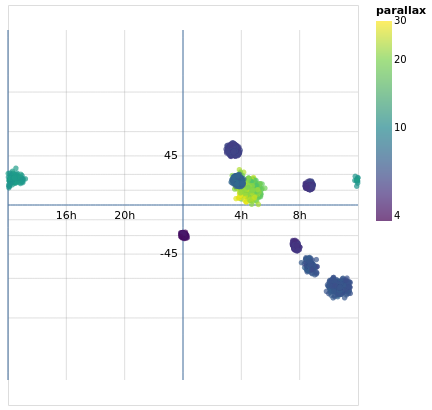

skyPlotWithGraticules :: VegaLite Source #

As promised earlier (in skyPlot), now that we have layers, we can

add graticules to a projection. In this case I create two graticule layers,

the "base" layer (grats), which creates the grey lines that cover

the map - using a spacing of 60 degrees (4 hours) for longitude and

15 degrees for latitude - and then an extra layer (grats0), which shows blue lines

at longitude seprations of 180 degrees

and latitude spacings of 90 degrees. In this case the central horizontal and

vertical lines represent 0 degrees, and the one at the left shows

-180 degrees. There are no latitude lines for -90 or +90 since the

default is to stop at ±85 degrees (see GrExtent for a way to

change this).

I added the second graticule layer to see if I could get by without

labels for the grid lines, but decided this did not work out too well,

so ended with two layers, one each for the Right Ascension and

Declination values, using dataFromColumns to manually create the

label positions and label content to display with the Text mark.

Open this visualization in the Vega Editor

let trans = transform

. calculateAs

"datum.RA_ICRS > 180 ? datum.RA_ICRS - 360 : datum.RA_ICRS"

"longitude"

axOpts field = [ PName field, PmType Quantitative ]

enc = encoding

. position Longitude (axOpts "longitude")

. position Latitude (axOpts "DE_ICRS")

. color [ MName "plx"

, MmType Quantitative

, MScale [ SType ScLog

, SScheme "viridis" []

]

, MLegend [ LTitle "parallax" ]

]

. tooltip [ TName "Cluster", TmType Nominal ]

stars = asSpec [ gaiaData, trans [], enc [], mark Circle [] ]

grats = asSpec [ graticule [ GrStep (60, 15) ]

, mark Geoshape [ MStroke "grey"

, MStrokeOpacity 0.5

, MStrokeWidth 0.5

]

]

grats0 = asSpec [ graticule [ GrStep (180, 90)

]

, mark Geoshape [ ]

]

raData = dataFromColumns []

. dataColumn "x" (Numbers [ -120, -60, 60, 120 ])

. dataColumn "y" (Numbers [ 0, 0, 0, 0 ])

. dataColumn "lbl" (Strings [ "16h", "20h", "4h", "8h" ])

decData = dataFromColumns []

. dataColumn "x" (Numbers [ 0, 0 ])

. dataColumn "y" (Numbers [ -45, 45 ])

. dataColumn "lbl" (Strings [ "-45", "45" ])

encLabels = encoding

. position Longitude (axOpts "x")

. position Latitude (axOpts "y")

. text [ TName "lbl", TmType Nominal ]

raLabels = asSpec [ raData []

, encLabels []

, mark Text [ MAlign AlignCenter

, MBaseline AlignTop

, MdY 5

]

]

decLabels = asSpec [ decData []

, encLabels []

, mark Text [ MAlign AlignRight

, MBaseline AlignMiddle

, MdX (-5)

]

]

in toVegaLite [ width 400

, height 350

, projection [ PrType Mercator ]

, layer [ grats, grats0, stars, raLabels, decLabels ]

]

The layers are drawn in the order they are specified, which is why the grid lines are drawn under the data (and labels).

You can see the distortion in this particular projection (the

Mercator projection),

as the spacing between the latitude lines increases as you move towards the

bottom and top of the plot. There are a number of other projections you

can chose from, such as the Orthographic projection I use in

concatenatedSkyPlot.

Concatenating views

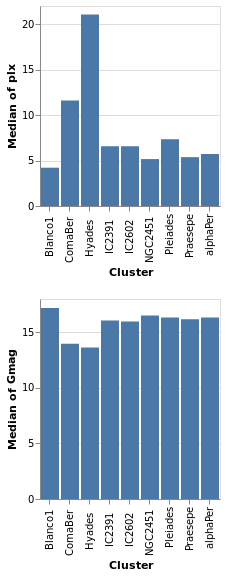

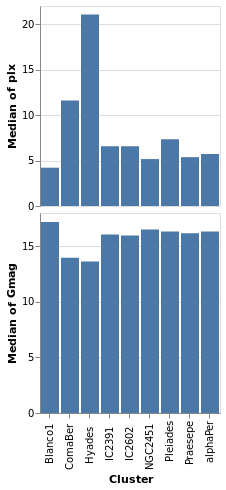

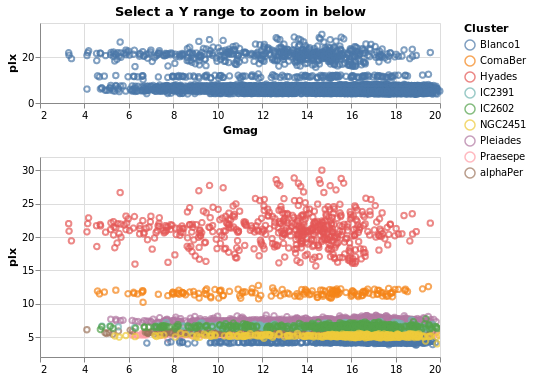

concatenatedPlot :: VegaLite Source #

Instead of layering one view on top of another (superposition), we can place them side by side in a row or column (juxtaposition). In Vega-Lite this is referred to as concatenation:

Open this visualization in the Vega Editor

let enc field = encoding

. position X [ PName "Cluster", PmType Nominal ]

. position Y [ PName field, PmType Quantitative, PAggregate Median ]

parallaxes = [ mark Bar [], enc "plx" [] ]

magnitudes = [ mark Bar [], enc "Gmag" [] ]

specs = map asSpec [ parallaxes, magnitudes ]

in toVegaLite

[ gaiaData

, vConcat specs

]

The hConcat function would align the two plots horizontally,

rather than vertically (and is used in concatenatedSkyPlot).

Note that as the axes are identical apart from the field for the y axis, the encoding has been moved into a function to enforce this constraint (this ensures the x axis is the same, which makes it easier to visually compare the two plots). However, there is no requirement that the two plots be "compatible" (they could use different data sources).

concatenatedPlot2 :: VegaLite Source #

The alignment of the plots can be adjusted with spacing, which we

use here to remove the vertical gap between the two plots (the

example is written so that we can see the only difference between

the two plot specifications is the addition of PAxis []

Open this visualization in the Vega Editor

let enc field flag = encoding

. position X ([ PName "Cluster", PmType Nominal ] ++

if flag then [ PAxis [] ] else [])

. position Y [ PName field, PmType Quantitative, PAggregate Median ]

parallaxes = [ mark Bar [], enc "plx" True [] ]

magnitudes = [ mark Bar [], enc "Gmag" False [] ]

specs = map asSpec [ parallaxes, magnitudes ]

in toVegaLite

[ gaiaData

, spacing 0

, vConcat specs

]

Even though we set spacing to 0 there is still a small gap between

the plots: this can be removed by using bounds Flush

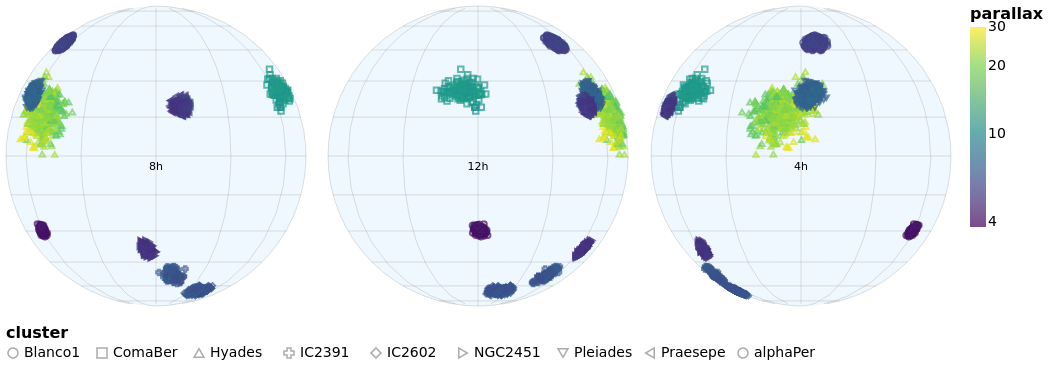

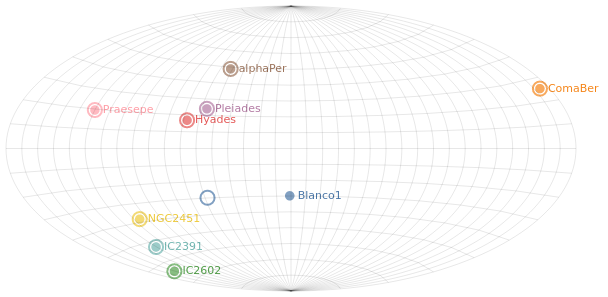

concatenatedSkyPlot :: VegaLite Source #

In skyPlotWithGraticules I used the Mercator projection to display

the stars on the sky, but promised I would also show you data using the

Orthographic projection.

The main specification (that is, the argument of toVegaLite) starts

with a change to the plot defaults, using configure to ensure

that no border is drawn around the plot (note that in combinedPlot

I do the same thing, but by setting the stroke color to

Just "transparent" rather than Nothing). The default data

stream is set up, to ensure we have "longitude" and

"DE_ICRS" values to display. It then has three

versions of the same visualization, varying only on rotation angle and

label, stacked horizontally with hConcat.

Each plot - created with the rSpec helper function - defines

a plot size, uses the Orthographic projection with the

given rotation (the lambda term of PrRotate) to change the

center of the display, and then the plot itself is formed from

four layers:

sphereis used to indicate the area of the plot covered by the sky (filled with a blue variant);- graticules are drawn at every 30 degrees (longitude, so 2 hours in Right Ascension) and 15 degrees (latitude);

- the stars are drawn using color to encode the parallax of the star and the symbol shape the cluster membership (although the density of points is such that it can be hard to make the shapes out);

- and a label is added at the center of the plot to indicate the Right Ascension (the label could be determined automatically from the rotation angle, but it was easier to just specify it directly).

Since the data values have two different encodings - color and shape -

there are two legends added. I place them in different locations using

LOrient: the parallax goes to the right of the plots (which is the

default) and the symbol shapes to the bottom. Both use larger-than-default

font sizes for the text (title and label).

let trans = transform

. calculateAs

"datum.RA_ICRS > 180 ? datum.RA_ICRS - 360 : datum.RA_ICRS"

"longitude"

axOpts field = [ PName field, PmType Quantitative ]

legend ttl o = MLegend [ LTitle ttl

, LOrient o

, LTitleFontSize 16

, LLabelFontSize 14

]

enc = encoding

. position Longitude (axOpts "longitude")

. position Latitude (axOpts "DE_ICRS")

. color [ MName "plx"

, MmType Quantitative

, MScale [ SType ScLog

, SScheme "viridis" []

]

, legend "parallax" LORight

]

. shape [ MName "Cluster"

, MmType Nominal

, legend "cluster" LOBottom

]

. tooltip [ TName "Cluster", TmType Nominal ]

stars = asSpec [ enc [], mark Point [] ]

grats = asSpec [ graticule [ GrStepMinor (30, 15) ]

, mark Geoshape [ MStroke "grey"

, MStrokeOpacity 0.5

, MStrokeWidth 0.5

]

]

lblData r h0 =

let r0 = -r

lbl = h0 <> "h"

in dataFromColumns []

. dataColumn "x" (Numbers [ r0 ])

. dataColumn "y" (Numbers [ 0 ])

. dataColumn "lbl" (Strings [ lbl ])

encLabels = encoding

. position Longitude (axOpts "x")

. position Latitude (axOpts "y")

. text [ TName "lbl", TmType Nominal ]

labels r h0 = asSpec [ lblData r h0 []

, encLabels []

, mark Text [ MAlign AlignCenter

, MBaseline AlignTop

, MdY 5

]

]

bg = asSpec [ sphere, mark Geoshape [ MFill "aliceblue" ] ]

rSpec r h0 = asSpec [ width 300

, height 300

, projection [ PrType Orthographic

, PrRotate r 0 0

]

, layer [ bg, grats, stars, labels r h0 ]

]

s1 = rSpec (-120) "8"

s2 = rSpec 0 "12"

s3 = rSpec 120 "4"

setup = configure . configuration (ViewStyle [ ViewNoStroke ])

in toVegaLite [ setup []

, gaiaData

, trans []

, hConcat [ s1, s2, s3 ] ]

Repeated views

Creating the same plot but with a different field is common-enough

that Vega-Lite provides the repeat operator.

Varying fields field

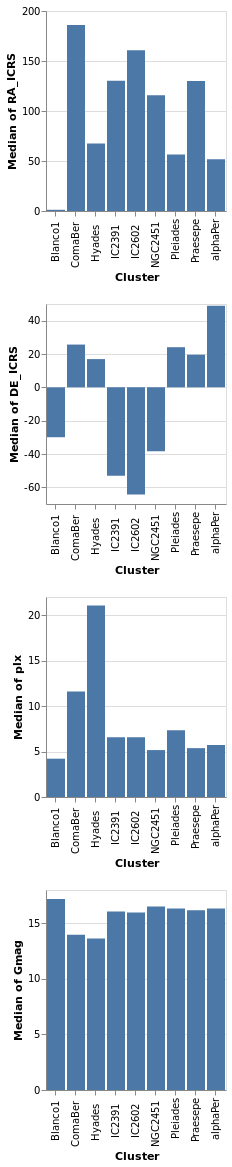

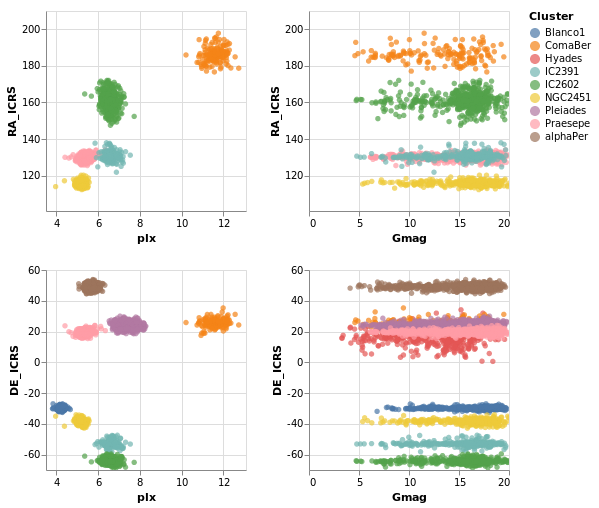

repeatPlot :: VegaLite Source #

The concatenatedPlot example can be extended to view the

distribution of several fields - in this case Right Ascension,

Declination, parallax, and magnitude:

Open this visualization in the Vega Editor

let enc = encoding

. position X [ PName "Cluster", PmType Nominal ]

. position Y [ PRepeat Row, PmType Quantitative, PAggregate Median ]

spec = asSpec [ gaiaData

, mark Bar []

, enc [] ]

rows = [ "RA_ICRS", "DE_ICRS", "plx", "Gmag" ]

in toVegaLite

[ repeat [ RowFields rows ]

, specification spec

]

This more compact specification replaces the data field name

(for example PName "plx"PRepeat) either as a Row or Column depending on the desired

layout. We then compose the specifications by providing a set of

RowFields (or ColumnFields) containing a list of the fields to which

we wish to apply the specification (identified with the function

specification which should follow the repeat function provided to

toVegaLite).

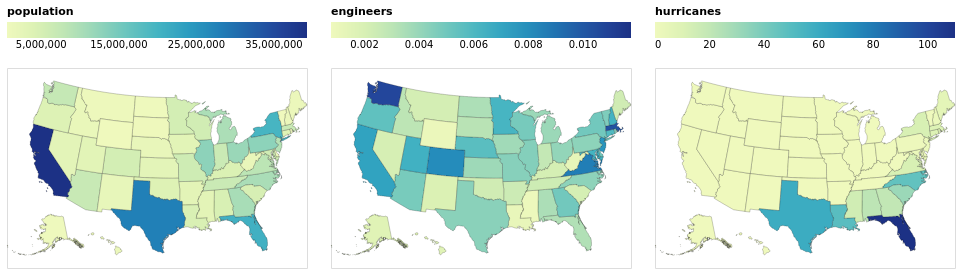

Repeating Choropleths

choroplethLookupFromGeo :: VegaLite Source #

If we want to plot more than one map from the same table of data

we need to do the lookup in the other order, using lookup to add the

geographic data to the data table. Charting this way requires

specifiying a few things differently than in the previous

choropleth example (choroplethLookupToGeo):

- We're using

LuAsinlookup, rather thanLuFields, which lets us use all the fields (columns) in the source rather than a specified subset. - We use a different set of geographic features (state rather than county

outlines) from

usGeoData. - The plot is defined as a

specification, but does not directly refer to the value being displayed. This is set "externally" with the call torepeat. Since we have just had an example withRowFields, this time we useColumnFieldsto stack the maps horizontally. - Since the different fields have vastly-different ranges (a maximum of

roughly 0.01 for "engineers" whereas the "population" field is

a billion times larger), the color scaling is set to vary per field

with

resolve.

Open this visualization in the Vega Editor

let popEngHurrData = dataFromUrl "https://raw.githubusercontent.com/vega/vega/master/docs/data/population_engineers_hurricanes.csv" []

plotWidth = 300

viz = [ popEngHurrData

, width plotWidth

, transform

. lookup "id" (usGeoData "states") "id" (LuAs "geo")

$ []

, projection [PrType AlbersUsa]

, encoding

. shape [MName "geo", MmType GeoFeature]

. color [MRepeat Column, MmType Quantitative, MLegend [LOrient LOTop, LGradientLength plotWidth]]

$ []

, mark Geoshape [MStroke "black", MStrokeOpacity 0.2]

]

in toVegaLite

[ specification $ asSpec viz

, resolve

. resolution (RScale [(ChColor, Independent)])

$ []

, repeat [ColumnFields ["population", "engineers", "hurricanes"]]

]

By moving the legend to the top of each visualization, I have taken

advantage of the fixed with (here 300 pixels) to ensure the

color bar uses the full width (with LGradientLength).

Rows and Columns

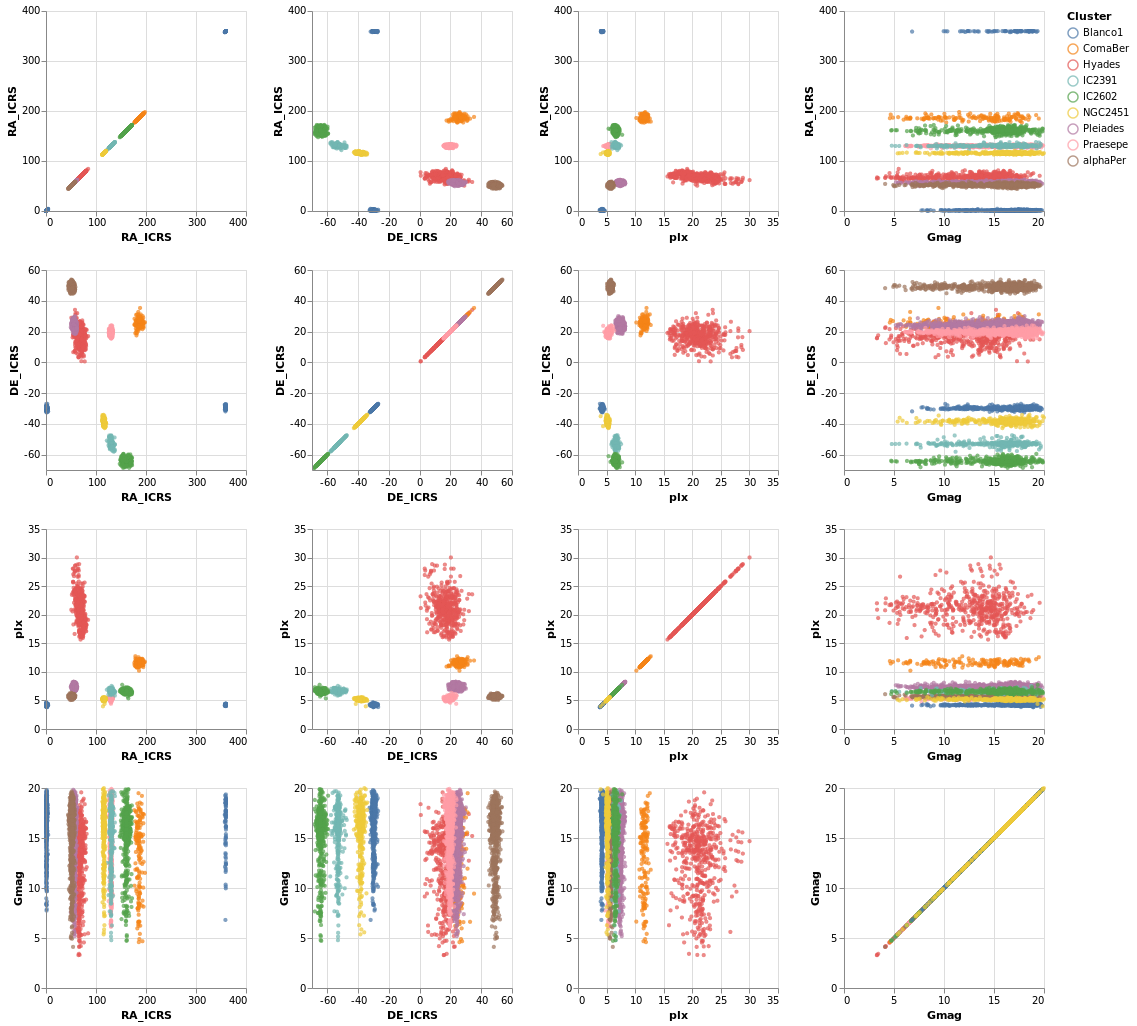

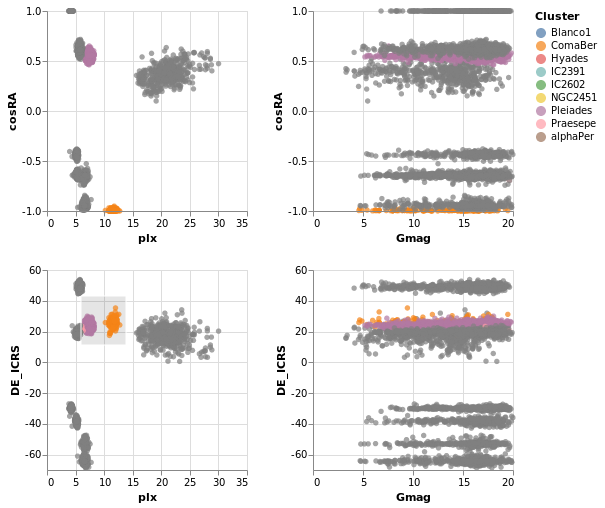

splomPlot :: VegaLite Source #

We can combine repeated rows and columns to create a grid of views, such as a scatterplot matrix, adding in color encoding to separate out the clusters:

Open this visualization in the Vega Editor

let enc = encoding

. position X [ PRepeat Column, PmType Quantitative ]

. position Y [ PRepeat Row, PmType Quantitative ]

. color [ MName "Cluster", MmType Nominal ]

spec = asSpec [ gaiaData

, mark Point [ MSize 5 ]

, enc [] ]

fields = [ "RA_ICRS", "DE_ICRS", "plx", "Gmag" ]

in toVegaLite

[ repeat [ RowFields fields, ColumnFields fields ]

, specification spec

]

To be honest, this is not the best dataset to use here, as

there is no direct correlation between location (the RA_ICRS

and DE_ICRS fields) and the other columns, but it's the

dataset I chose, so we are stuck with it.

Once you have sub-plots as a specification, you can combine them horizontally and vertically to make a dashboard style visualization. Interested parties should check out the Building a Dashboard section of the Elm Vega-Lite Walkthrough for more details.

Interactivity

Interaction is enabled by creating selections that may be combined with the kinds of specifications already described. Selections involve three components:

- Events are those actions that trigger the interaction such as clicking at a location on screen or pressing a key.

- Points of interest are the elements of the visualization with which the interaction occurs, such as the set of points selected on a scatterplot.

- Predicates (i.e. Boolean functions) identify whether or not something is included in the selection. These need not be limited to only those parts of the visualization directly selected through interaction.

Arguments

| :: Text | The selection name |

| -> Text | The title for the plot |

| -> [PropertySpec] |

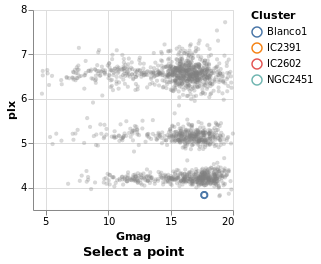

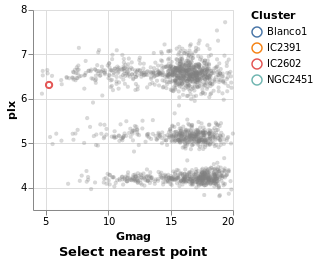

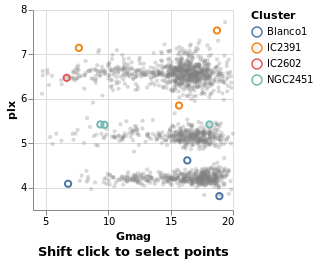

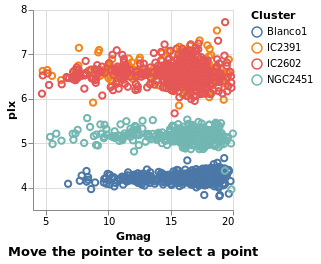

The next several plots show different types of selection - select a single point, a range of plots, or follow the mouse - and all have the same basic structure. To avoid repetition, and mistakes, I am going to introduce a helper function which creates the plot structure but without the selection definition, and then use that to build up the plots.

The helper function, selectionProperties, takes two arguments, which are

the selection name and the plot title. The selection name is used